Card Layout and Photos

What’s that hiding in its anti-static bag? Don’t be shy, we won’t hurt you. The GTX 680 actually came to me in the sexy packaging below, but I had to leave the packaging in San Francisco because of limited space in my bags.

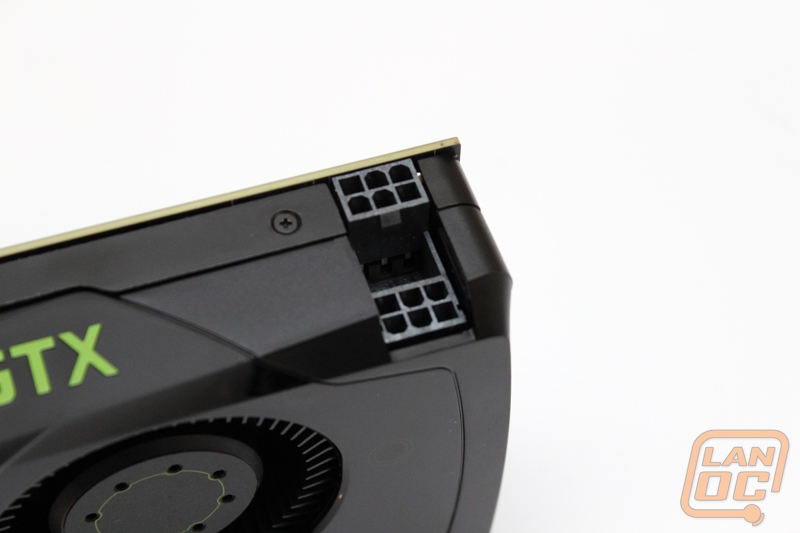

Here are full card shots of the GTX 680. Even in a reference design, this is a good-looking card. I love the raised GeForce GTX logo up top, with green highlighting to make it stand out even more. I hope this carries over into other cards based on the reference design. It’s nice to be able to show off what you have inside through your side panel window.

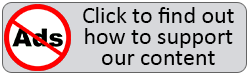

This is no surprise for a flagship card, but the GTX 680 is equipped with dual SLI connectors. This means 3-way and 4-way SLI are possible, depending on the motherboard.

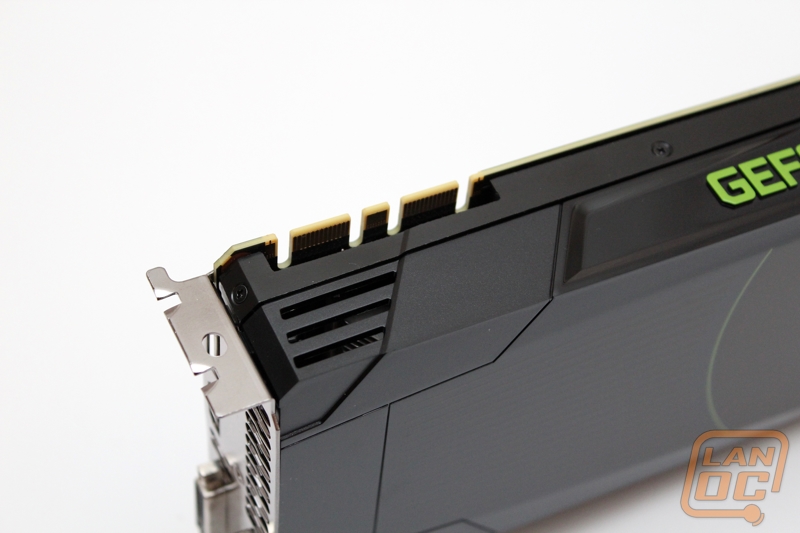

I have mentioned it already in this review, but here is a shot of the dual six-pin power connections on the GTX 680. This is a unique design, but I did run into one problem with it. The second connection faces inward, meaning you need small fingers to reach down to the lower plug and hold the unlock tab on your six-pin power cable in order to remove it. Nvidia mentioned that this layout gives them more room on the PCB for improvements and also allows the card’s fan to be better placed.

For connections on the reference design we have two DVI, one HDMI, and a full-sized DisplayPort connection. Nvidia also packs as much ventilation as possible into the design. They have pushed the vents almost up against the DVI port and have added a couple of vents below the HDMI plug.

The side of the reference cooler has a nice indented section around the GPU fan. This gives the card room to breathe when it is packed up against other cards or other devices. We have run into cards in the past without this breathing room, and they can get a little too hot. It’s nice that Nvidia has kept that in mind.

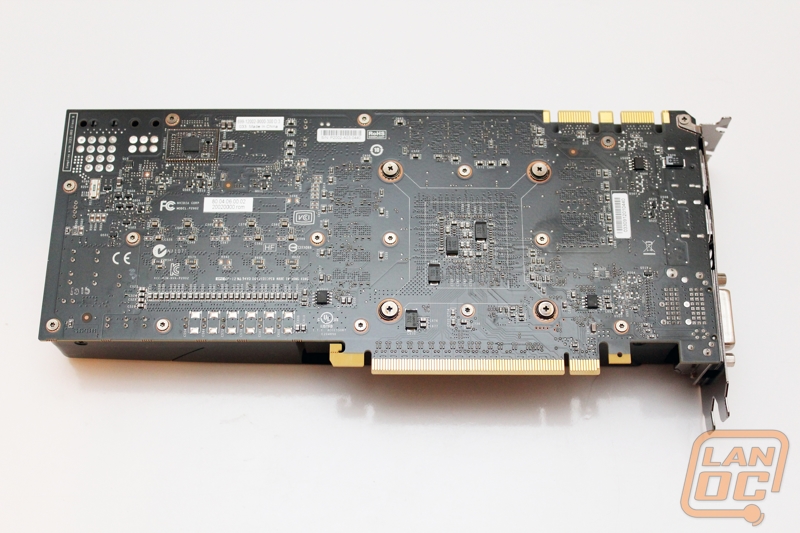

Here is the full back of the PCB. As you can see, the card does not come with a backplate, but they did go with a nice black PCB. The thing that stands out most to me on the PCB is the collection of power connections up in the top left corner. Nvidia explained earlier why they went with the stacked power connection design, but you can actually see that they could have gone with either connection layout (ignoring any cooling considerations). They have also included enough connections for an 8-pin if needed. Are these leftovers from a late design change, or are we seeing power connections that could be used in future cards based on the same PCB? Only time will tell.

Here are a few shots of the GTX 680 paired up with the card it is replacing, the GTX 580. As you can see, the new GTX 680 is actually a little bit shorter. Having GeForce GTX embossed on the top of the card is a nice touch, especially next to the GTX 580.

You have all seen and heard the rumors for months now about Nvidia’s upcoming GPU, code-named Kepler. When we got the call that Nvidia was inviting editors out to San Francisco to show us what they have been working on, we jumped at the chance to finally put the rumors to rest and see what they really had up their sleeve. Today we can finally tell you all the gory details and dig into the performance of Nvidia’s latest flagship video card. Will it be the fastest single-GPU card in the world? We finally find out!
